Executive Brief, April 2026

Tracking the Right Data for Texas SST Accountability

As districts navigate the transition to Texas SST Accountability, many are discovering a disconnect between adaptive growth signals and the static, grade-level mastery required for a high rating. This executive brief explores how to move beyond predictive traps to secure your district's future.

Executive Summary: The Adaptive Disconnect

Across Texas, districts are seeing encouraging mid-year signals from adaptive diagnostics, yet end-of-year accountability results often tell a different story. As the state transitions to the Student Success Tool (SST) in 2027-2028, district leaders must ensure their through-year signals reliably match the rigid, criterion-referenced expectations of the TEA.

Leadership Insight: You cannot adequately prepare students for a static, grade-level mastery assessment if their only formal testing windows actively adjust away from grade-level rigor the moment students struggle.

I. The "Adaptive Growth Trap"

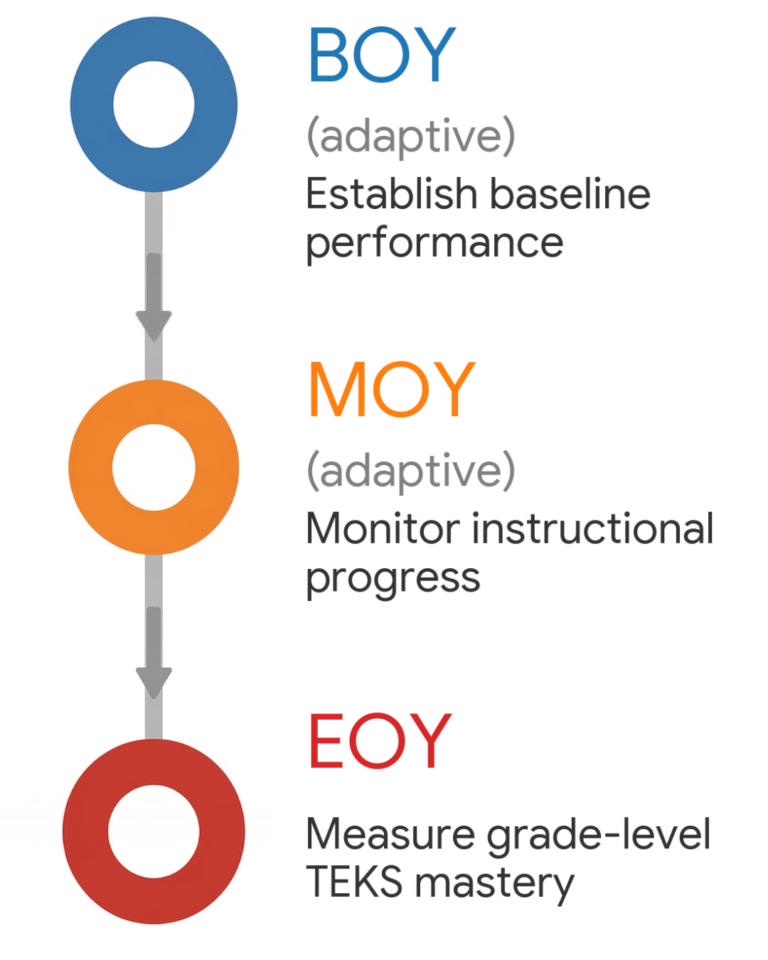

The new SST model introduces a fundamental disconnect. The Beginning-of-Year (BOY) and Middle-of-Year (MOY) assessments are adaptive diagnostics, while the End-of-Year (EOY) assessment is TEKS criterion-referenced.

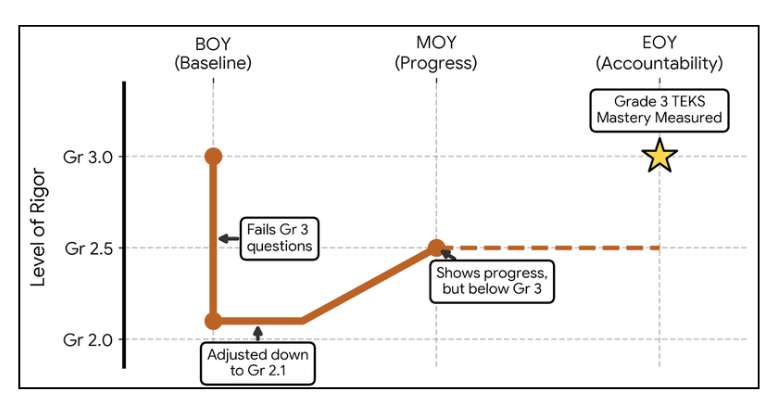

The Performance Cliff: Adaptive assessments lower item difficulty when a student struggles, so the score reflects the student’s comfort level rather than grade-level rigor.

The Illusion of Progress: A student can show meaningful improvement on a below-grade trajectory while remaining below the rigor required for the final EOY measurement.

Accountability Risk: Relying only on adaptive data can lead to a “Performance Cliff” that negatively impacts final accountability ratings.

Leadership Insight: State BOY and MOY assessments may predict outcomes based on off-grade growth trajectories while hiding the specific questions students miss. You cannot reteach a statistical prediction.

II. The "Predictive" Trap of Modern Dashboards

When students complete adaptive assessments on platforms like Cambium™, the system reports scores estimated through Item Response Theory (IRT) rather than direct evidence of TEKS mastery. These dashboards often omit the data teachers need most:

No Item-Level Visibility: Teachers cannot see the specific questions missed because the item bank is secured.

No Granular TEKS Data: If a student levels down to lower-grade questions, the system cannot confirm if they mastered grade-level Readiness standards.

The “Predicted Meets” Illusion: Students may be flagged as “On Track” simply because they are growing steadily on a below-grade trajectory.
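
To make the mechanism concrete, here is a minimal sketch using the one-parameter (Rasch) IRT model. This is an illustration only: the scoring model actually used by the SST or any vendor platform is not specified in this brief, and the ability and difficulty values below are hypothetical numbers on a logit scale.

```python
import math


def p_correct(ability: float, difficulty: float) -> float:
    """Rasch (1PL) probability that a student answers an item correctly."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def adaptive_item_difficulty(ability: float) -> float:
    """Simplified adaptive rule (assumption): serve items at the student's level."""
    return ability


# Hypothetical values chosen only for illustration.
GRADE_LEVEL_DIFFICULTY = 1.0           # a typical on-grade item
BOY_ABILITY, MOY_ABILITY = -1.5, -0.5  # the student grows a full logit by mid-year

for window, ability in [("BOY", BOY_ABILITY), ("MOY", MOY_ABILITY)]:
    on_adaptive = p_correct(ability, adaptive_item_difficulty(ability))
    on_grade = p_correct(ability, GRADE_LEVEL_DIFFICULTY)
    print(f"{window}: adaptive item {on_adaptive:.2f}, grade-level item {on_grade:.2f}")
```

In this sketch the student answers about half of the adaptive items correctly in both windows and shows real growth, yet the chance of answering a grade-level item correctly stays well below 50 percent at mid-year. That gap is the below-grade trajectory described above.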

III. Why Grade-Level TEKS Mastery is What Counts

The Texas SST Accountability system evaluates districts using three distinct domains: Student Achievement, School Progress, and Closing the Gaps. Because these domains rely on year-to-year comparisons of criterion-referenced assessments, success depends entirely on students demonstrating grade-level mastery—not off-grade adaptive growth.

IV. The Solution: Daily Instructional Intelligence

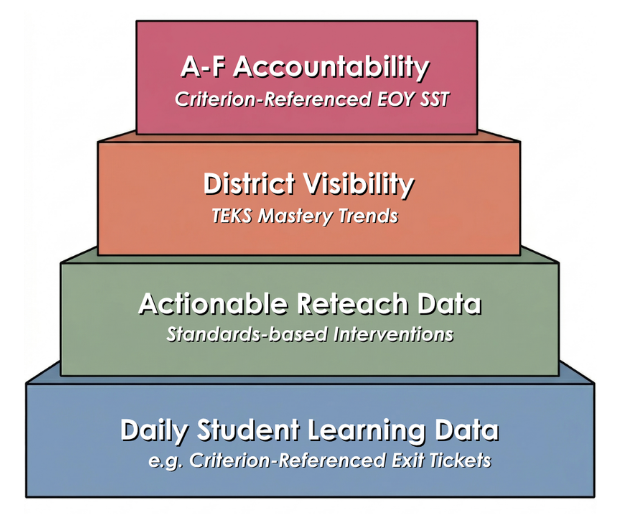

If benchmark testing is prohibited and adaptive diagnostics are unreliable for accountability, where is the “right” data? The answer lies in daily classroom evidence, which is fundamentally criterion-referenced.

By using Classwork.com™ to digitize daily work—such as Bluebonnet™ Learning exit tickets—districts can escape the adaptive trap:

Daily Criterion-Referenced Mastery: Exit tickets measure specific, lesson-level understanding against state standards.

Actionable Reteach Data: Teachers receive exact item-level data to plan immediate reteach sessions.

Preserving IMRA Funds: Districts can avoid spending their $40-per-student IMRA incentive on misaligned adaptive software by digitizing their existing high-quality instructional materials (HQIM).
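
As a rough sketch of what item-level, criterion-referenced data makes possible, the example below rolls exit-ticket responses up by TEKS standard and lists the students to reteach. The record layout, function names, and sample students are hypothetical and do not represent the Classwork.com™ data model or API; the TEKS codes are used only as sample labels.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemResponse:
    """One student's answer to one exit-ticket item (hypothetical layout)."""
    student: str
    teks: str      # standard the item is aligned to, e.g. "3.4A"
    item_id: str
    correct: bool


def mastery_by_teks(responses):
    """Return the share of correct responses for each TEKS standard."""
    totals = defaultdict(lambda: [0, 0])  # teks -> [correct, attempted]
    for r in responses:
        totals[r.teks][0] += int(r.correct)
        totals[r.teks][1] += 1
    return {teks: correct / attempted for teks, (correct, attempted) in totals.items()}


def reteach_groups(responses):
    """Return, per TEKS standard, the students who missed at least one item."""
    groups = defaultdict(set)
    for r in responses:
        if not r.correct:
            groups[r.teks].add(r.student)
    return {teks: sorted(students) for teks, students in groups.items()}


# Made-up sample data for illustration only.
responses = [
    ItemResponse("Ana", "3.4A", "q1", True),
    ItemResponse("Ana", "3.4A", "q2", False),
    ItemResponse("Ben", "3.4A", "q1", False),
    ItemResponse("Ben", "3.4A", "q2", True),
    ItemResponse("Ana", "3.4K", "q3", True),
    ItemResponse("Ben", "3.4K", "q3", True),
]

print(mastery_by_teks(responses))
print(reteach_groups(responses))
```

Because every response stays tied to a specific item and standard, the same records support both a district-level mastery view and a teacher-level reteach list.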

Leadership Insight: Capturing daily instructional TEKS data eliminates the need for additional testing. It organically supports the teacher's lesson while generating the exact criterion-referenced mastery data needed to succeed on final state accountability tests.

Strategic Takeaway

The districts that succeed in the SST era will not rely on adaptive growth signals; they will ensure that daily instruction produces clear, comparable evidence of grade-level TEKS mastery that is visible across the district.

What is the Student Success Tool (SST)? The SST is the new Texas assessment model that takes effect in 2027-2028. It replaces the traditional testing structure with three distinct windows: an adaptive BOY to establish a baseline, an adaptive MOY to monitor instructional progress, and a criterion-referenced EOY to measure grade-level TEKS mastery.

Why is there a “Performance Cliff” in the new model? A “Performance Cliff” occurs because BOY and MOY assessments are adaptive—meaning they lower the difficulty level if a student struggles. Because the EOY assessment is static and criterion-referenced, students may show progress on an off-grade-level trajectory all year, only to fail when they encounter the full rigor of grade-level TEKS in April.

Do adaptive growth signals count toward A-F Accountability ratings? No. While adaptive BOY and MOY assessments support instructional decision-making, the Texas Education Agency (TEA) does not use them to determine official accountability ratings. Ratings depend entirely on year-to-year comparisons of static, criterion-referenced EOY outcomes across Domains 1, 2, and 3.

Why can’t I use Cambium™ or Zearn™ data for reteaching? Adaptive systems often hide the specific items students miss in order to keep their item banks secure. Furthermore, because these tests adjust away from grade-level rigor, they cannot tell you whether a student mastered specific grade-level standards (like Readiness TEKS 3.4A) if the student never saw those questions. You cannot effectively reteach a statistical prediction or a “growth streak.”

How can districts monitor TEKS mastery without over-testing? The most effective way is to digitize daily classroom evidence, such as Bluebonnet™ Learning exit tickets. Because this work is already aligned with state standards, capturing this data through Classwork.com™ provides real-time, criterion-referenced visibility into student mastery without the need for additional benchmark testing.

How does this impact IMRA funds? Many districts currently spend their $40-per-student IMRA (Instructional Materials Review and Approval) incentive on adaptive software that is misaligned with state accountability. By digitizing existing high-quality instructional materials (HQIM) instead, districts preserve those funds while gaining the exact criterion-referenced data required for EOY success.